Separating the mind from the body…

Today’s tech leaders envision separating mind from body in AI-driven computers.

They are also developing a quasi-religious hope in the so-called “Metaverse,” where AI determines what we see, hear, learn, and love.

A rapidly growing number of people now earnestly wonder: What will AI do to society? The fear has gained ground as AI has developed, and machines are now increasingly demonstrating the ability to execute complex tasks without human interaction or direction.

By Nazarul Islam

Imagine yourself inside a high-tech lab somewhere in Silicon Valley, filled with robots being trained in artificial intelligence (AI). One robot’s eyes open, as if it’s waking up for the first time. It looks around, confusion and wonder in its lifelike eyes. It brings a hand up to its face, giving a glance that says it’s never seen itself before. The robot’s name is ‘Zoya’, or give it any name.

Technology leaders of our world met it in a video released late last month. When Elon Musk saw a similar robot made by the same company a few days later, he declared: “Real androids are coming.”

A longtime critic of artificial intelligence, Musk likes to speak for the countless people who fear a future defined by this beyond-cutting-edge technology.

That fear has been lurking for more than 50 years, since Stanley Kubrick depicted AI as a mortal threat to mankind in 2001: A Space Odyssey. (Similar anxieties can be seen earlier, in Mary Shelley’s Frankenstein.) It has gained ground as AI has developed, and machines are now increasingly demonstrating the ability to create and execute complex tasks without human interaction or direction, which is the definition of artificial intelligence.

A rapidly growing number of people now earnestly wonder: What will AI do to society?

It’s an evocative, important question. But the answer will depend on the answer to a more important question altogether: Who is building AI? Artificial intelligence will do great evil or great good depending on the beliefs of those who make it. And right now, it is primarily being built by tech leaders with little to no understanding of or respect for the morality, virtues, and wisdom of the Judeo-Christian tradition.

Like it or not, the greatest threat with AI isn’t all-powerful robots. It’s amoral people.

The threat of artificial intelligence is increasingly unmistakable. It could be used to exploit people economically, subverting our God-given call to work. It could be applied in ways that censor free speech, stifling the voices that God has granted us. It could replace human sensibility and compassion with robotic expediency, suffocating the rational soul that reflects God’s image. There are already AI-driven robots capable of reproducing; will they and their spawn inherit the earth?

Such possibilities have led many to predict that artificial intelligence will end life as we know it. Elon Musk has said that adopting AI is like “summoning the devil.” Stephen Hawking declared that it may “replace humans altogether.” And many other scientific and social leaders have spoken in the same vein, saying that AI is among “the new tools of oppression” and likening it to “children playing with a bomb.” Hardly a day goes by without another apocalyptic prediction.

But this vision of artificial intelligence is far too pessimistic. As with all technology, artificial intelligence is the creation of people — thinking beings who can order AI according to certain principles, if we so choose. We can design it to respect and augment reason, empower and improve the productivity of workers, transform how we protect and uplift the most vulnerable among us, and generally serve humanity instead of making servants of us.

Let us understand that AI has incredible potential to free us from much drudgery, help cure disease, and create a healthier, wealthier, and happier world.

Ensuring that artificial intelligence serves people is not simple. It requires tech leaders with properly formed consciences — men and women grounded in virtue, who understand what humanity is and what human beings need. Put another way, good AI depends on morally good AI creators. If we hope to maintain it as a tool and ourselves as its master, not the other way around, those who build this technology must understand right, wrong, and the foundational principles on which human flourishing depends.

Yet the opposite is happening right now.

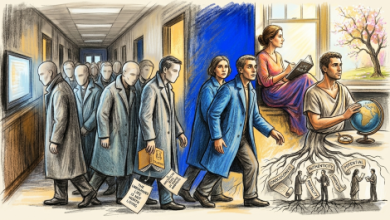

The tech elite possess extraordinary technical education, yet they are extraordinarily uneducated in history, philosophy, literature, and theology. As I saw while building tech businesses in both Silicon Valley and Seattle, tech leaders weren’t trained in the liberal arts. They weren’t taught to think critically about tough ethical questions. And they weren’t even taught to accept the concept of truth, preferring instead a vague spirituality.

Many have fancied themselves freethinkers and yet, cut off from the philosophical foundations of freedom, their thought is profoundly constrained and uniform. Perhaps their consciences are generally not properly formed.

The result is what C. S. Lewis described in The Abolition of Man: “We castrate and bid the geldings be fruitful.” Freed from any concept of Judeo-Christian morality, today’s tech leaders envision separating mind from body in AI-driven computers. They are also developing a quasi-religious hope in the so-called “metaverse,” where AI determines what we see, hear, learn, and love.

The big question is: Where will Silicon Valley stop? Nowhere, because nothing eternal or ethical is holding the tech elite back from doing, designing, and destroying whatever they please, using the unsurpassed power of artificial intelligence. The zeitgeist is their conscience, and if it tells them to do something, they will comply.

If we reach a future where artificial intelligence overruns and rules us, the blame will lie with the human intelligence that thought such a future was not just possible but worth pursuing.

AI is already rushing down that dangerous road, driven by people who see no error in their ways and no ethical barriers in their path. Their elitism and amorality make it easier to envision a day when AI does the terrible things we only talk about now.

I believe strongly that a powerful tool cannot be left in these hands, and to society’s credit, calls are growing to rein in AI before it’s too late. To date, most commentators have emphasized the need for principles to guide AI’s development, but that presupposes the existence of people who are capable of creating and implementing such a framework. Those of us who are grounded in Judeo-Christian principles must design AI accordingly.

That means innovation, entrepreneurship, and, ultimately, building AI that reflects and is restrained by a time-tested moral code. Isaac Asimov’s “Three Laws of Robotics” are a useful starting point, but they aim only to prevent artificial intelligence from physically harming human beings.

There is a clear need for a broader definition of harm, one that includes the emotional, economic, and ethical damage that AI could do if not properly guided. Creating and implementing that definition must be the work of morally trained people.

Our goal should be nothing less than an AI “Magna Carta.” Such a framework would firmly establish the primacy of humanity vis-à-vis artificial intelligence and establish enumerated rights that AI and its developers cannot violate. The list must include speech, privacy, and, broadly speaking, life, liberty, and the pursuit of happiness.

Moreover, reflecting the demands of basic morality, such a Magna Carta should restrict AI from injuring the family, enabling immoral activity like lying, pushing people toward dependency, promoting pornography and human trafficking, and becoming a weapon of war.

Yet for any of this to work, principled, ethical individuals with well-formed consciences must be in the driver’s seat of AI’s development, which they currently are not. Nor is it encouraging to see the Biden administration developing an “AI Bill of Rights,” since the White House is run by ideologically minded people who are nearly as radicalized as Silicon Valley.

Either moral people will harness artificial intelligence’s tremendous potential for good or amoral people will continue to develop this technology for increasingly evil ends. Those who are blessed with a strong moral foundation must accept the burden of shaping AI for the better. And shouldn’t we do it sooner rather than later?